SCALING B2B EXPERIMENTATION

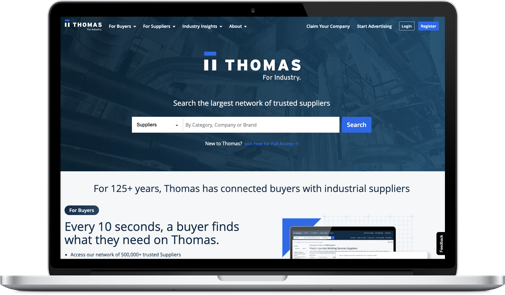

THOMAS.COM/XOMETRY

OVERVIEW

Thomas, a Xometry company, sold experimentation packages to hundreds of B2B clients but lacked the design capacity to deliver them at scale. I was brought in to stand up and run the experimentation program, designing a repeatable, research-driven system to improve usability, clarity, and conversion across complex industrial websites.

The program produced a 61.7% experiment win rate, shipped reusable design patterns, and helped clients build trust with technical buyers through clearer information, better taxonomy, and credibility-driven messaging.

PROJECT SCOPE

2022 – 2024

TL;DR

PROBLEM

Thomas, a Xometry company, sold experimentation packages to hundreds of B2B clients but did not have the design capacity or structure to deliver the work consistently. Experimentation was fragmented, difficult to scale, and often focused on surface-level changes rather than meaningful usability improvements.

APPROACH

I treated experimentation as a product design system, not a series of isolated tests. The goal was to create a repeatable, research-driven framework that could identify real user problems, validate design decisions, and scale learnings across many complex B2B sites.

WHAT I DID

I stood up and ran the experimentation program, combining quantitative analysis, moderated user testing, and established B2B UX best practices to form clear hypotheses. I designed and validated larger, intentional experiments, avoided low-impact and intrusive patterns, and focused on reusable solutions that improved clarity, trust, and decision-making for technical buyers.

IMPACT

The program achieved a 61.7% experiment win rate and led to sustained improvements in add-to-cart behavior, conversion, average order value, and revenue per visitor, without increasing reliance on promotions or discounts.

WHY IT MATTERS

The gains held over time, showing that the work addressed underlying product and usability issues rather than short-term optimizations, while giving Thomas a scalable way to deliver experimentation across its B2B client portfolio.

INDEX:

01. Role / Team / Timeline / Constraints

02. Context → Problem → Why it matters

03. Signals (quant + qual) → Key insight(s)

04. Hypotheses & Experiments

05. Key decisions

06. Results & What Shipped

01 | ROLE / TEAM / TIMELINE / CONSTRAINTS

Role

Product Designer (Growth & Experimentation)

Team

Thomas (a Xometry company), internal program stakeholders, client teams

Timeline

Multi-quarter engagement

Overview

Thomas, a Xometry company, managed hundreds of B2B websites for industrial and manufacturing clients. One of its offerings was conversion rate optimization and experimentation. After selling experimentation packages at scale, the team lacked the design and delivery capacity to execute the work consistently.

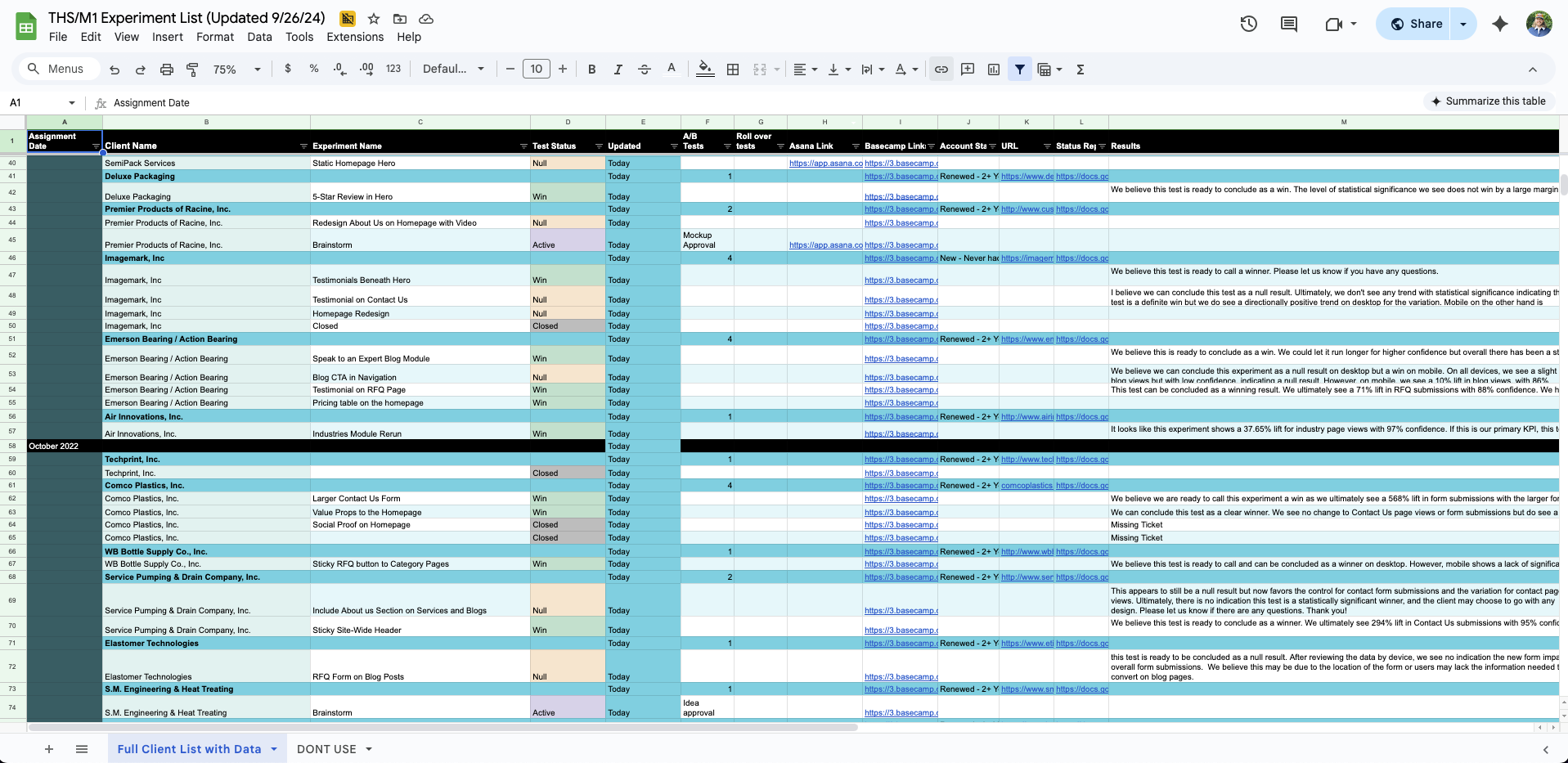

I was brought in to stand up and run the experimentation program across multiple B2B clients. My role was to design a repeatable workflow for identifying opportunities, designing solutions, validating ideas through experimentation, and supporting implementation across sites with different audiences, constraints, and technical setups.

This work focused on building a scalable system for experimentation-led product improvements rather than optimizing any single site in isolation.

Constraints & Considerations

Hundreds of B2B clients across different industries and site architectures

Technical buyers with long sales cycles and complex decision-making

Limited internal resources and varying levels of analytics maturity

Live production environments where changes carried real risk

02 | CONTEXT → PROBLEM → WHY IT MATTERS

Context

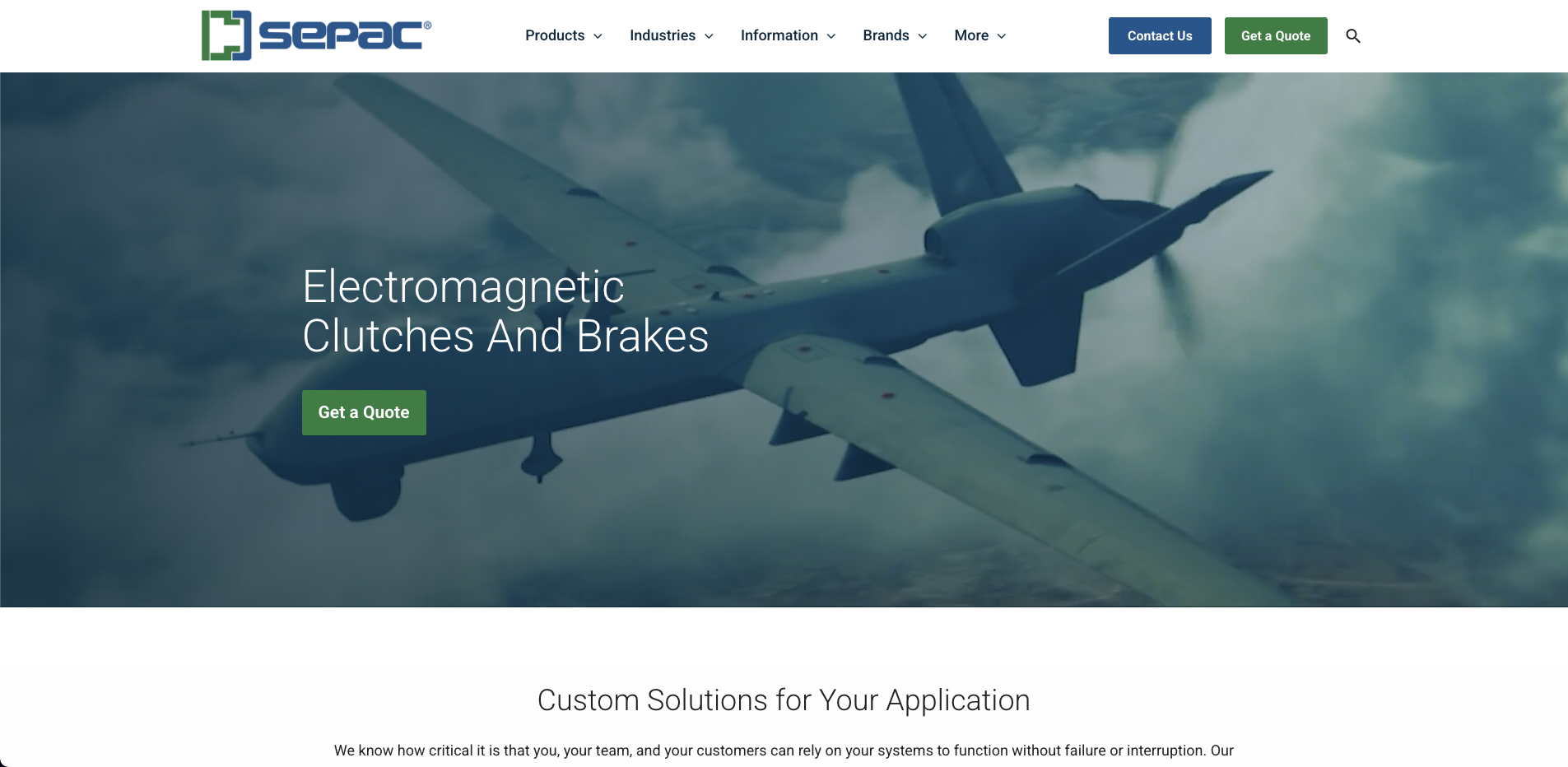

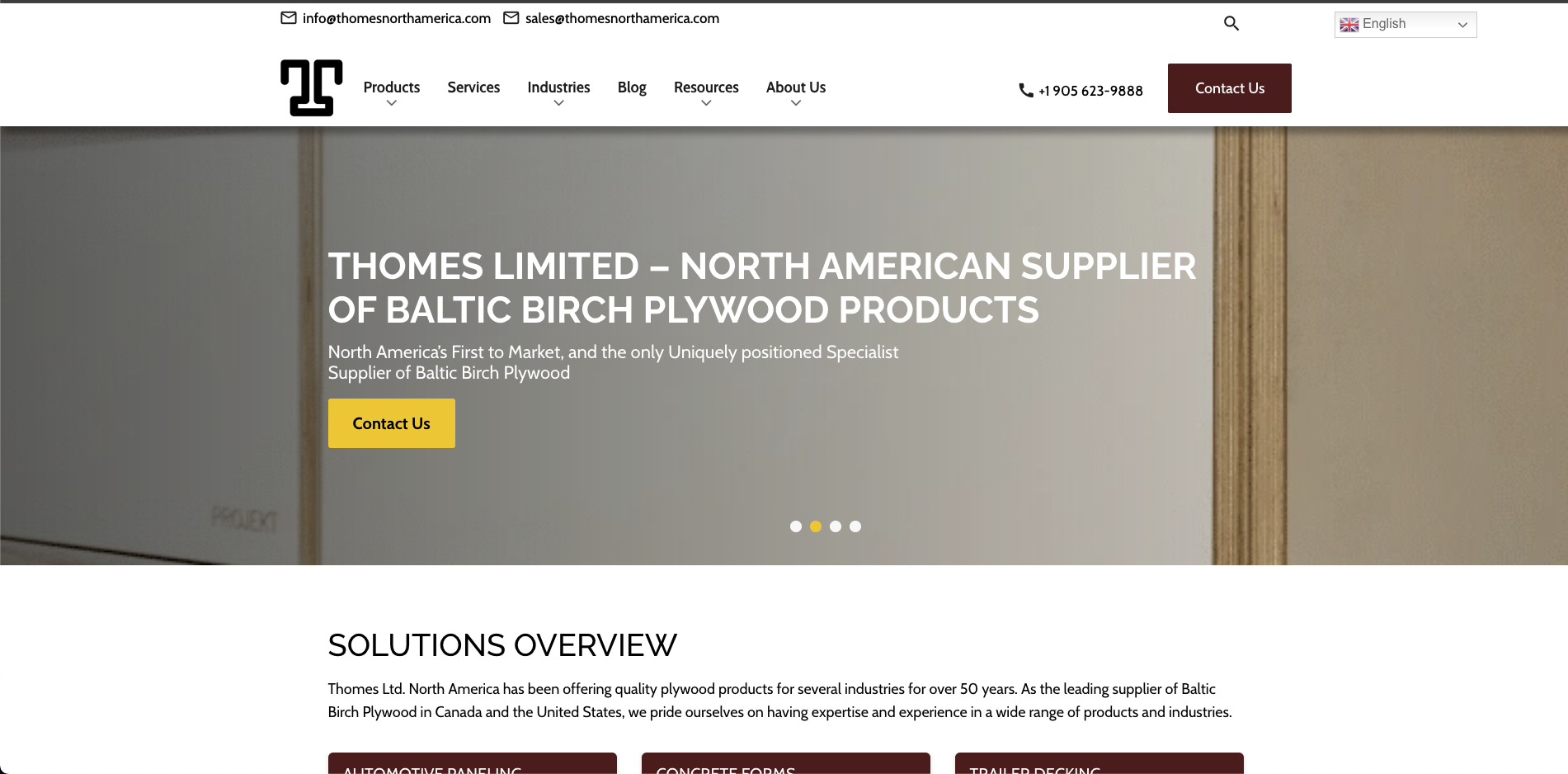

Thomas supported hundreds of B2B clients across industrial and manufacturing sectors, each with different products, audiences, and technical constraints. While these businesses varied widely, many shared a common goal: turning high-intent traffic into qualified leads or purchases through complex, information-dense websites.

Experimentation was positioned as a way to improve performance, but without a shared framework, teams were approaching optimization inconsistently. Efforts often focused on surface-level changes rather than addressing deeper usability and clarity issues that mattered to technical buyers.

Problem

B2B users were not failing to convert because of a lack of interest. They struggled because key information was unclear, difficult to find, or presented too late in the experience. This led to hesitation, confusion, and drop-off at critical decision points.

At the same time, Thomas needed a way to deliver experimentation work across many clients without relying on one-off solutions or subjective design decisions. Without structure, experimentation was slow, hard to scale, and difficult to tie back to meaningful product improvements.

Why It Matters

For technical B2B buyers, trust and clarity are more important than persuasion. If the product experience does not clearly communicate value, requirements, and next steps, even highly motivated users will disengage.

Solving this problem meant more than improving conversion metrics. It required a repeatable, design-led approach to experimentation that could scale across clients, reduce risk, and consistently improve usability in complex B2B environments.

03 | SIGNALS & INSIGHTS

To understand where experimentation could have the most impact, I looked across a mix of quantitative and qualitative signals from multiple B2B client sites. Rather than treating each site in isolation, the goal was to identify patterns that showed up consistently across industries and audiences.

Key Signals

Funnel and page-level analytics showing repeated drop-offs at similar moments in the user journey

Session recordings and heatmaps revealing hesitation, backtracking, and repeated interactions with the same elements

On-site feedback, support questions, and sales insights pointing to confusion around pricing, requirements, and next steps

Prior test results that showed inconsistent or short-lived wins when changes focused on surface-level optimizations

Backlog of Experiments

Insights

Across clients, users disengaged when they could not quickly tell whether a product or service fit their needs.

Several patterns emerged:

Important details were often buried too deep in the experience

Users reached decision points before they felt confident moving forward

Small UI changes produced noise, but clarity-driven changes produced more durable impact

The most reliable opportunities were not tied to specific layouts or industries, but to moments where users needed clearer information, stronger reassurance, or a better sense of what would happen next.

These insights shaped how hypotheses were formed and why experiments focused on improving clarity, trust, and decision-making rather than cosmetic changes.

04 | HYPOTHESES & EXPERIMENTS

The goal of experimentation in this program was not to make small cosmetic changes. Traffic on many B2B client sites was low, so small changes were unlikely to produce a reliable signal, and we needed more meaningful updates that could answer real questions about user behavior. That required grouping related changes into larger, intentional experiments rather than relying on minor iterative tweaks.

How hypotheses were formed

Hypotheses were based on a combination of quantitative data, moderated user testing, and established UX best practices for B2B eCommerce and lead generation. I regularly referenced research from sources like NN/g, Baymard, and Gartner, then paired those patterns with client-specific analytics to identify where experiences were clearly broken or ambiguous. Those moments became candidates for experimentation.

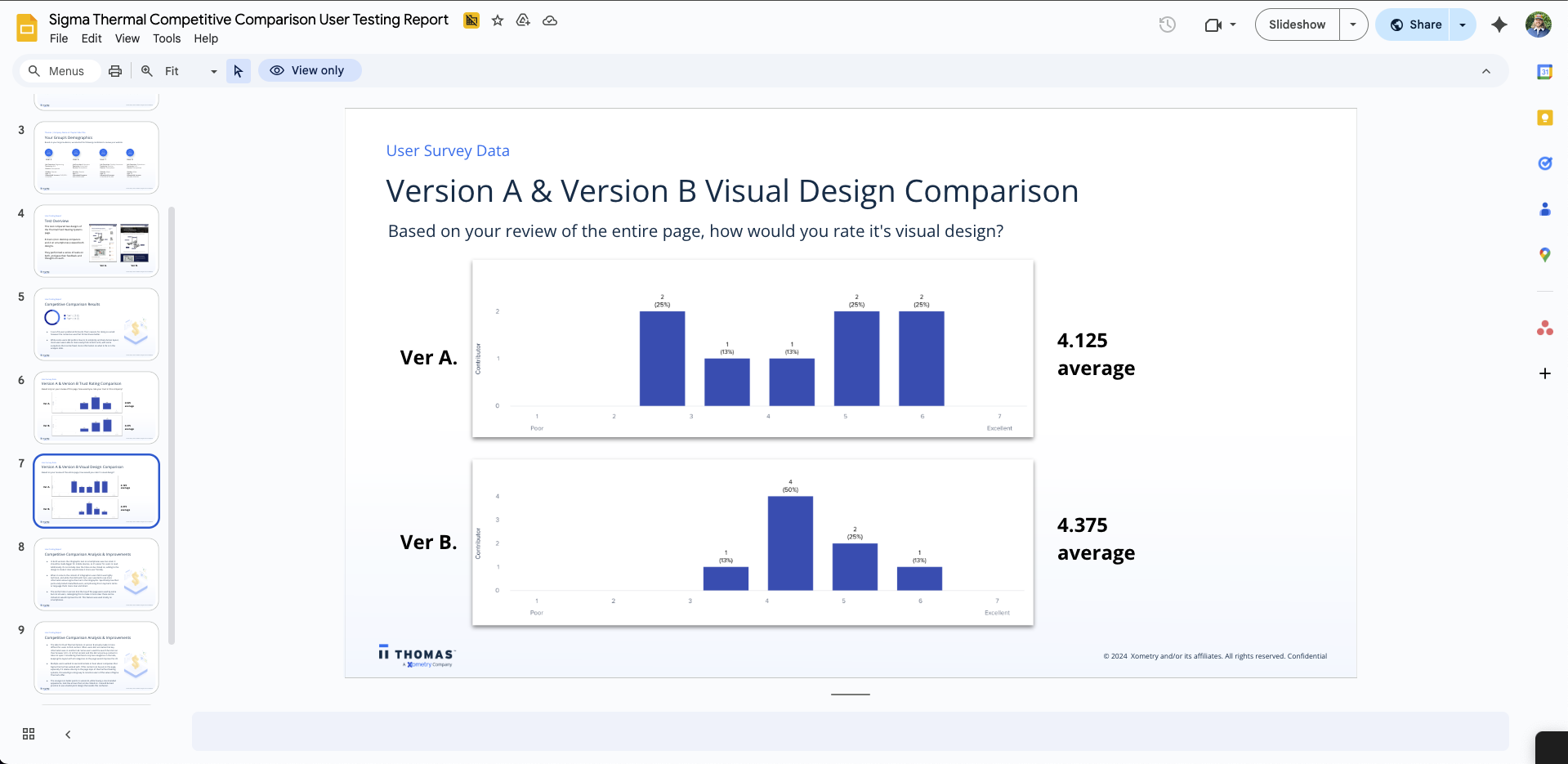

Early in the program, I pushed for more moderated user testing to better understand each client’s audience. Findings from those sessions were grouped into recurring themes, which helped determine where experimentation would have the most impact and allowed hypotheses to generalize across multiple clients.

Examples of Client Sites

Design intent and constraints

Certain types of experiments were intentionally avoided. We did not focus on new hero imagery, minor visual tweaks, or small color changes. We also avoided adding popups, chatbots, or persistent floating CTAs, as these often created noise without addressing core user needs.

Experiments had to respect technical accuracy, legal requirements, and brand credibility. For example, request-a-quote forms placed directly on product pages were often tested and found to be too early for users who needed more trust and context first.

Learning through experimentation

Not every experiment was expected to win. Some tests were designed to answer open questions, such as whether richer video content could improve understanding despite potential performance risks. In several cases, these experiments proved valuable and performed well for specific clients.

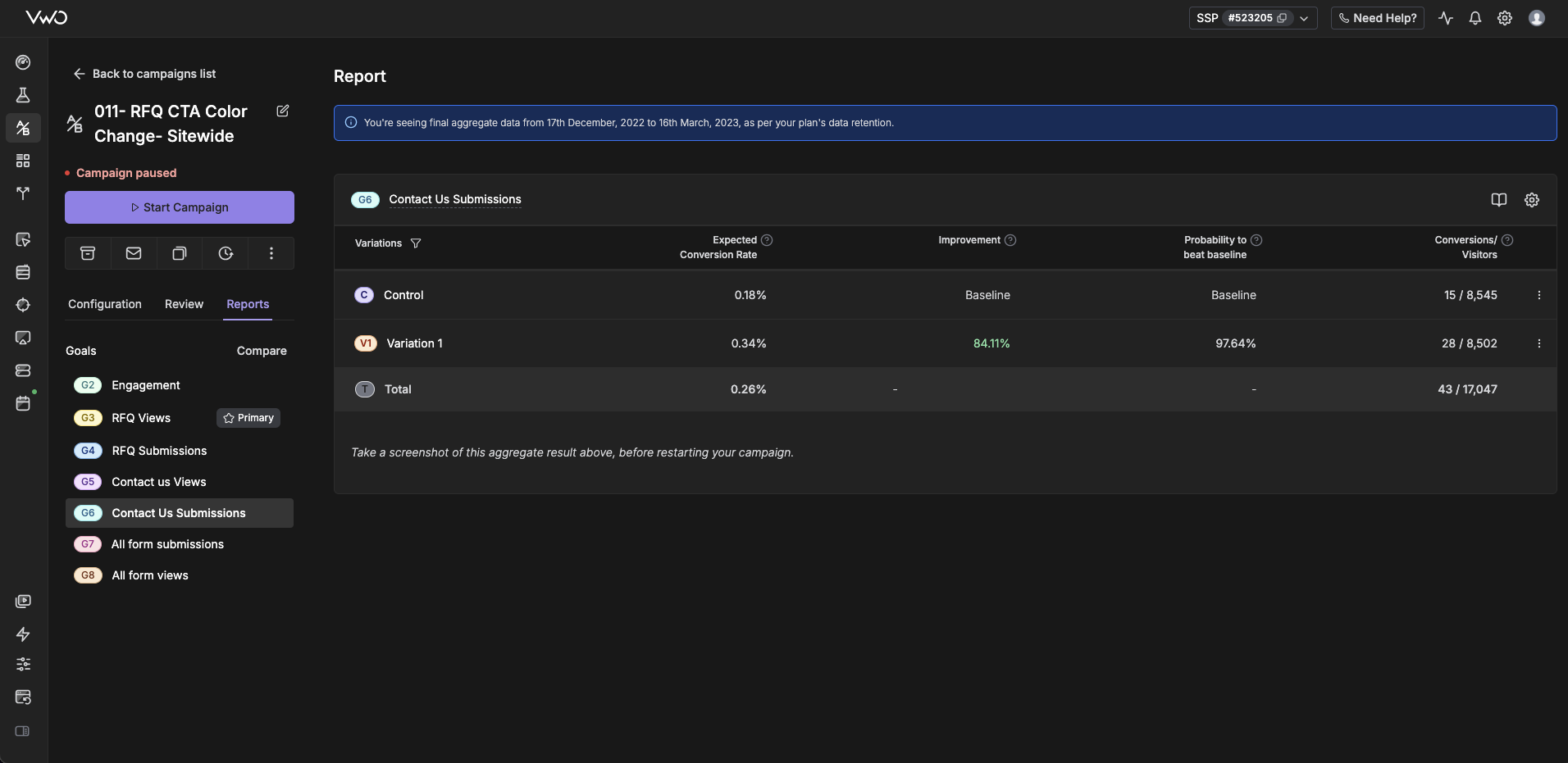

Success was measured using a mix of statistical significance, Bayesian analysis, and directional decision-making, supported by follow-up user testing. By the end of the program, experiments achieved a 61.7% win rate, while also producing durable learnings, clearer personas, and reusable patterns that informed future work.

I demonstrated how user research can clarify A/B test findings and shape next steps.

Strategic hypothesis themes

Rather than testing the same ideas everywhere, clients were grouped based on shared issues uncovered through data and research. Common themes included:

Information architecture and taxonomy

If users could not easily find the right product or category, no amount of persuasion would help. Experiments focused on improving structure, naming, and findability.

Product details and technical clarity

If users lacked access to critical specifications or documentation, they hesitated. Experiments tested clearer product information and supporting content that reduced uncertainty.

Trust and credibility signals

In many cases, users needed reassurance before taking action. Experiments explored how trust elements such as company credibility, manufacturing details, and messaging around American-made or family-owned businesses affected behavior.

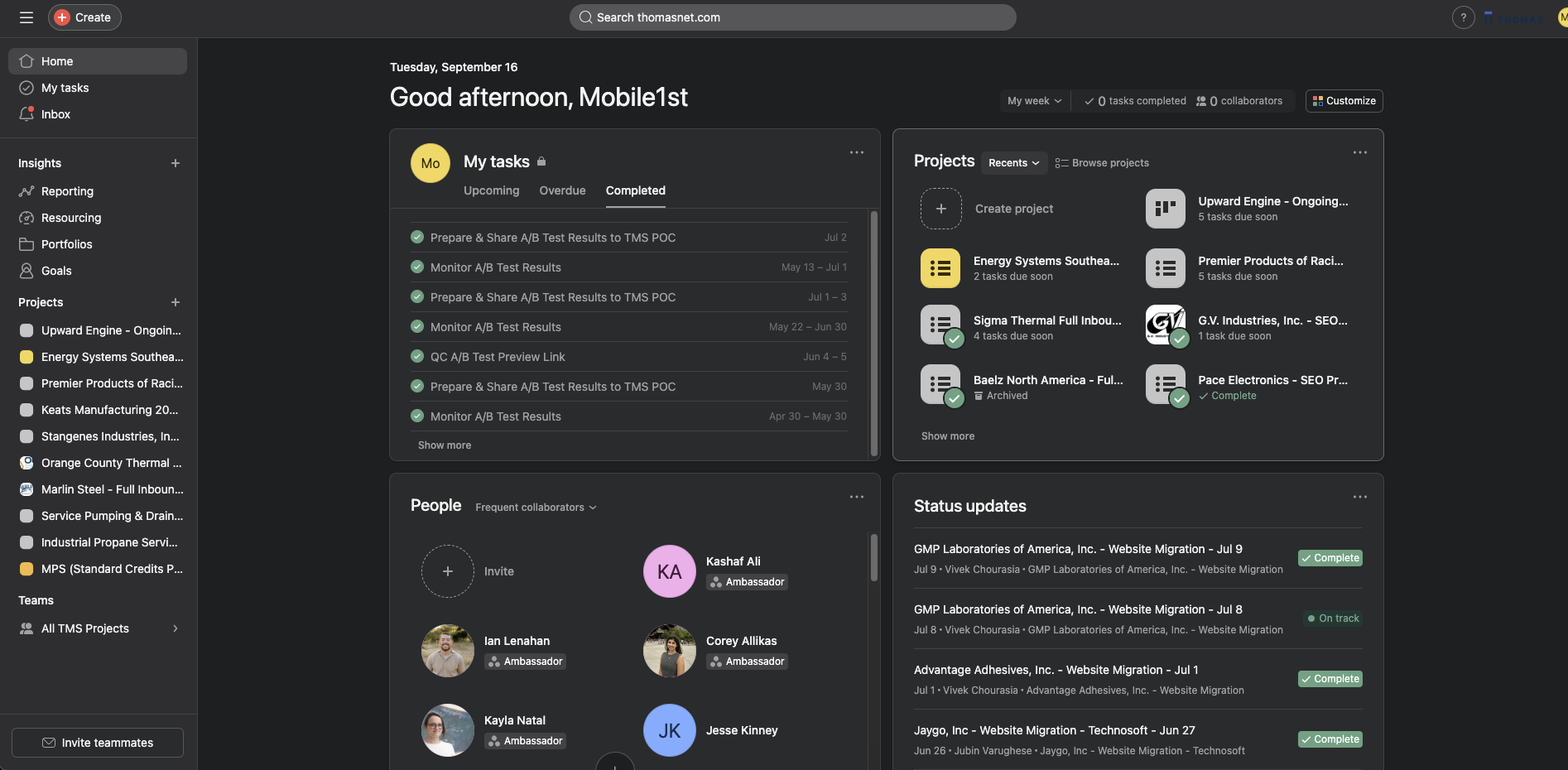

I worked within the client’s existing tools, translating Google Drive process flows into clear, actionable workflows in Asana.

05 | KEY DECISIONS

Several decisions shaped how this program scaled and how risk was managed across many B2B clients. These choices were less about optimization tactics and more about protecting trust, technical accuracy, and long-term maintainability.

Choosing larger, intentional experiments over small tweaks

Given lower traffic across many client sites, small visual changes were unlikely to produce reliable signal. Instead of testing minor adjustments in isolation, I grouped related updates into larger experiments that could answer meaningful questions about user behavior and decision-making.
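The traffic constraint can be made concrete with a rough sample-size estimate. This is a minimal sketch, assuming a standard two-sided two-proportion z-test at 5% significance and 80% power. The function name, the 2% baseline rate, and the lift values are illustrative assumptions, not figures or tooling from the program.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variant to detect a relative lift.

    Uses the textbook sample-size formula for comparing two proportions.
    All inputs are hypothetical; none come from the case study.
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Illustrative comparison: a small tweak vs. a larger bundled change.
small_tweak = visitors_per_variant(baseline=0.02, relative_lift=0.05)
bundled_change = visitors_per_variant(baseline=0.02, relative_lift=0.30)
print(small_tweak, bundled_change)
```

Under these assumptions, the small tweak needs far more visitors per variant than the bundled change, which is the practical reason larger experiments suit low-traffic sites.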

Avoiding intrusive engagement patterns

We intentionally did not rely on popups, chatbots, or persistent floating CTAs. While these can sometimes increase short-term engagement, they often added noise without addressing core usability issues. The focus remained on improving the underlying experience rather than layering on interruptions.

Delaying high-commitment actions until trust was established

In several cases, stakeholders wanted request-a-quote forms placed directly on product pages. Testing showed that this was often too early for users who still needed reassurance, technical clarity, or proof of credibility. We prioritized building confidence before asking users to commit.

Balancing experimentation with technical and legal constraints

All design decisions had to respect technical accuracy, legal language, and brand credibility. Experiments were designed to work within these boundaries rather than around them, which limited flashier concepts but reduced risk across live production environments.

Pushing back on one-off redesigns

Some clients requested full page redesigns or complex, JavaScript-heavy builds. I pushed back when these approaches introduced unnecessary technical debt or would be difficult to maintain if they performed well. When possible, we favored simpler, more durable patterns that client teams could own long term.

Prioritizing scalable learnings over isolated wins

An experiment was considered successful not only when it improved performance, but when the learning could be reused across other clients. This mindset helped build a shared set of patterns and principles instead of a collection of disconnected test results.

06 | RESULTS & WHAT SHIPPED

By the end of the program, experimentation was no longer ad hoc. Thomas had a repeatable way to identify opportunities, validate design decisions, and apply learnings across a broad set of B2B clients.

Results

Experiments achieved a 61.7% win rate across participating clients

Directional and statistically significant improvements in conversion and lead generation

Clear increases in user confidence and engagement, supported by follow-up user testing

Stronger alignment between design decisions and business outcomes

Because traffic levels varied across clients, success was measured using a combination of statistical significance, Bayesian analysis, and directional signal. Results were validated not just through metrics, but through before-and-after user testing and qualitative feedback.
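As an illustration of the Bayesian side of that measurement, the sketch below estimates the probability that a variant's true conversion rate exceeds the control's. The case study does not say which model or tool was used, so this assumes a simple Beta-Binomial model with uniform priors and Monte Carlo sampling. The function name and the visitor and conversion counts are hypothetical.

```python
import random


def prob_variant_beats_control(control_conv, control_n,
                               variant_conv, variant_n,
                               draws=20000, seed=0):
    """Estimate P(variant rate > control rate) from Beta posteriors.

    Assumes a uniform Beta(1, 1) prior on each conversion rate.
    This is an illustrative model, not the program's documented method.
    """
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    wins = 0
    for _ in range(draws):
        control_rate = rng.betavariate(1 + control_conv,
                                       1 + control_n - control_conv)
        variant_rate = rng.betavariate(1 + variant_conv,
                                       1 + variant_n - variant_conv)
        if variant_rate > control_rate:
            wins += 1
    return wins / draws


# Hypothetical low-traffic test: 1,000 visitors per arm.
p = prob_variant_beats_control(control_conv=20, control_n=1000,
                               variant_conv=35, variant_n=1000)
print(f"P(variant beats control) is roughly {p:.2f}")
```

A probability like this can support a directional decision on sites where a classical significance threshold would take too long to reach, provided follow-up user testing points the same way.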

What Shipped

Reusable design patterns for pricing clarity, product details, and trust-building

Improved information hierarchy and taxonomy across multiple client sites

Downloadable content such as pricing charts and technical documentation that supported internal sharing and longer buying cycles

Clearer messaging around credibility, including manufacturing details and trust signals that consistently improved performance

Several learnings had impact beyond individual experiments. For example, downloadable pricing content had minimal effect on direct purchases but significantly increased qualified leads. Messaging that emphasized American-made products and family-owned businesses repeatedly improved trust and drove some of the strongest gains across sites.

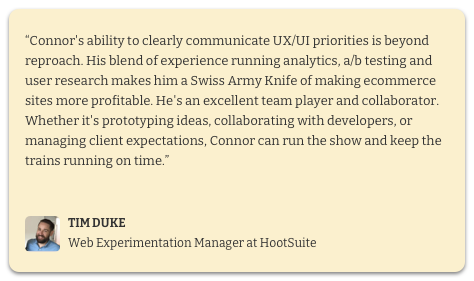

ABOUT ME

I am an accomplished Director of Optimization and UX Strategy with more than 10 years of expertise in UX/UI design, user research, and optimization.